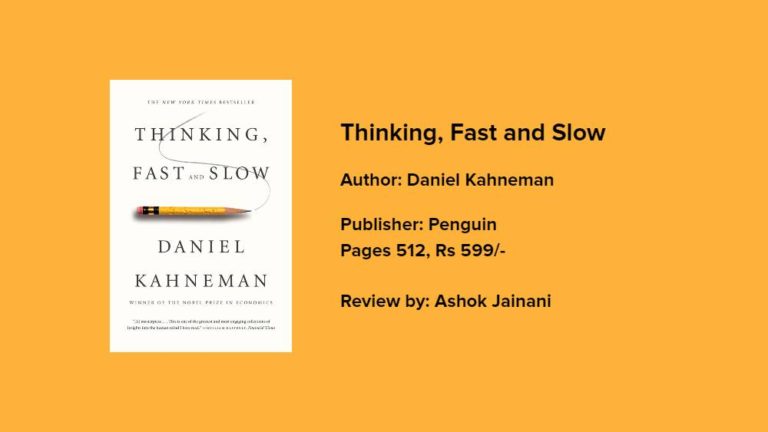

A human being is a complex dynamic system. Reading this book will transform the way you think about thinking. It exposes the extraordinary capabilities — and also the faults and biases — of thinking to reveal the pervasive influence of intuitive impressions on our thoughts and behavior.

Overconfidence is the leitmotif of this book by Daniel Kahneman, winner of the 2002 Nobel Memorial Prize in Economic Sciences. All of us, including the experts, are prone to an exaggerated sense of how well we understand the world. Kahneman takes the reader on a tour of the mind and explains the two systems that drive the way we think. System-1 is our fast, automatic, intuitive, and largely unconscious mode; System-2 is our slow, deliberate, analytical, and consciously effortful mode of reasoning about the world.

Kahneman and his longtime collaborator Amos Tversky discovered “systematic errors in the thinking of normal people”: errors that arise not from the corrupting effects of emotion but from the design of our evolved cognitive machinery. That machinery, according to Kahneman, is the source of many of the biases that infect our thinking. System-1 jumps to an intuitive conclusion based on a “heuristic” (an easy but imperfect way of answering hard questions), and System-2 lazily endorses this heuristic answer without bothering to scrutinize whether it is logical. Are the two systems separate agents in our heads? Not really. Kahneman says they are “useful fictions” because they help explain the quirks of the human mind.

We’re astonishingly susceptible to being influenced, or puppeted, by our surroundings in ways we don’t suspect. Our self-ignorance extends beyond the details of the two-system approach to judgment and choice.

We hugely underestimate the role of chance in life. A powerful illusion sustains fund managers in their belief that their results, when good, reflect skill rather than luck.

From the Book

“One famous experiment centered on a NY City phone booth. Each time a person came out of the booth after having made a call, an accident was staged – someone dropped all her papers on the pavement. Sometimes a dime had been placed in the phone booth, sometimes not. If there was no dime, only 4% of the exiting callers helped to pick up the papers. If there was a dime, no fewer than 88% helped.”

“Jumping to conclusions is efficient if the conclusions are likely to be correct and the costs of an occasional mistake acceptable, and if the jump saves much time and effort. Jumping to conclusions is risky when the situation is unfamiliar, the stakes are high, and there is no time to collect more information. These are the circumstances in which intuitive errors are probable.”

“The tendency to like, or dislike, everything about a person — including things you have not observed — is known as the ‘halo effect,’ a common bias that plays a large role in shaping our view of people and situations.”

“The amount and quality of data on which the story is based are largely irrelevant. When information is scarce, a common occurrence, System-1 operates as a machine for jumping to conclusions.”

“Extreme predictions and a willingness to predict rare events from weak evidence are both manifestations of System-1…It is natural for System-1 to generate overconfident judgments because confidence is determined by the coherence of the best story you can tell from the evidence at hand. Be warned: your intuitions will deliver predictions that are too extreme and you will be inclined to put far too much faith in them.”

“There was a great deal of skill in the Google story, but luck played a more important role in the actual event than it does in the telling of it. And the more luck was involved, the less there is to be learned.”